Political Deepfakes Are Here: What the 2026 Midterms Tell Us

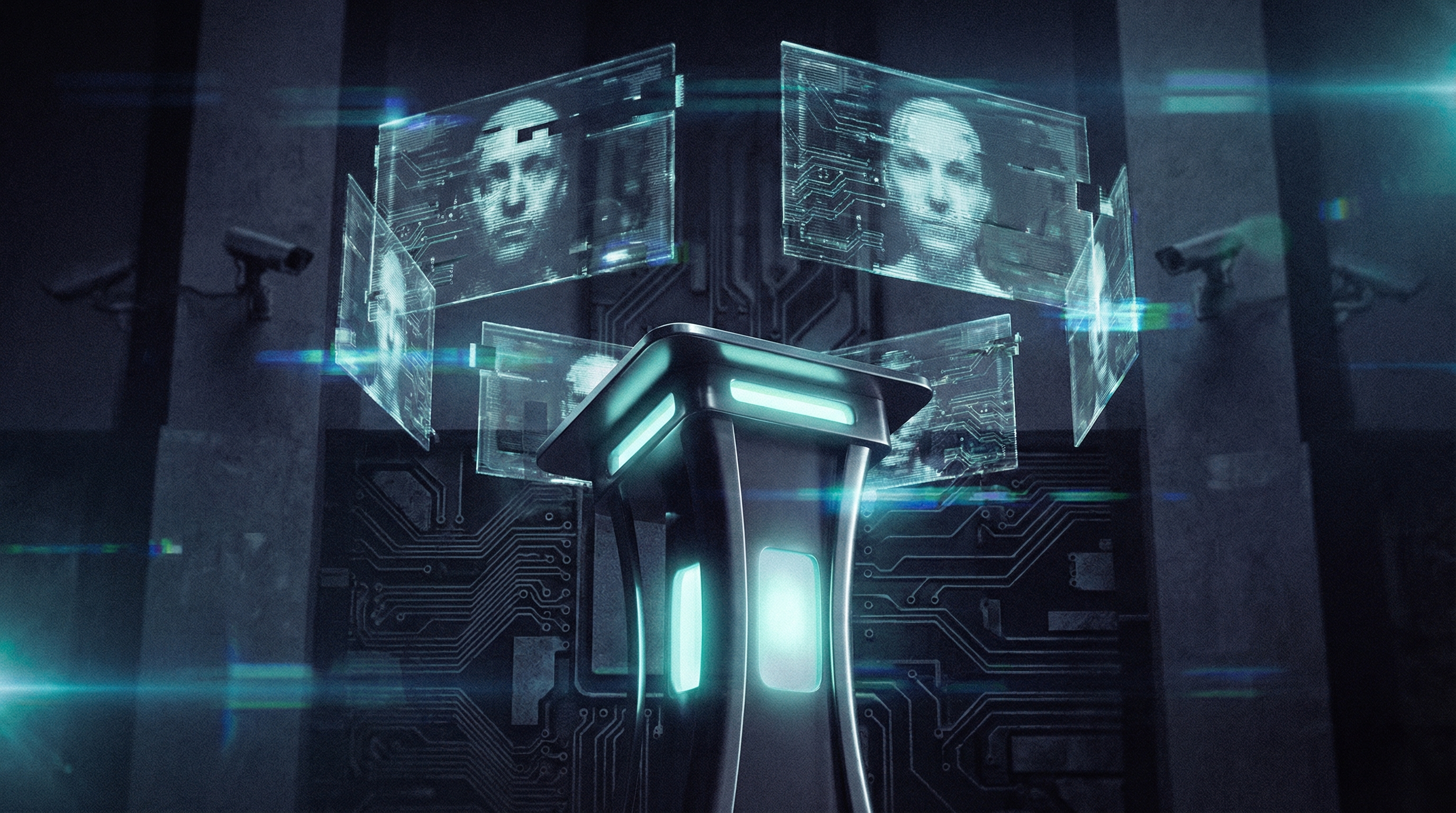

An 85-second AI-generated video put words in a Senate candidate's mouth. It looked real. Most people never noticed the warning label. This is what political deepfakes look like now, and the 2026 midterms have only just begun.

On March 11, 2026, the National Republican Senatorial Committee posted a video to X. At first glance, it showed James Talarico, the newly minted Democratic nominee for Texas's US Senate seat, reading aloud from his old social media posts. He smiled. He laughed. He seemed almost proud of statements about domestic terrorism and gender identity that he had actually written years ago.

None of the footage was real. The face, the voice, and the performance were all AI-generated. Talarico never sat in front of a camera and said any of those things. Yet the video racked up millions of views, and the only disclosure was a tiny translucent watermark in the bottom corner, on screen for three seconds, that said "AI GENERATED."

Welcome to the 2026 midterms.

What Actually Happened With the Talarico Ad

The 85-second video was produced using face-swap and voice-cloning technology. The NRSC took real posts Talarico had published between 2004 and 2021, then generated a synthetic version of him appearing to read and endorse each one enthusiastically. His face is eerily accurate. His voice patterns match. The blazer, the framing, the lighting all look like a legitimate political clip.

The underlying facts in the video were not fabricated. Talarico did write those posts. But what he never did was smile and say "So true" and "I love this one too" while reading them back. The AI manufactured a tone, a context, and an attitude he never expressed. That is where it crosses from political attack ad into something more corrosive.

Robert Weissman, co-president of Public Citizen, called it "a disgrace" and said the NRSC should take it down immediately, adding: "Political deepfakes are a profound threat to our democracy, because there is no adequate defense against them."

The NRSC has used this tactic before. In October 2025, it released a similar video showing a synthetic version of Senate Minority Leader Chuck Schumer appearing to celebrate a government shutdown. Politico reporter Adam Wren noted at the time that deepfake ads have become central to the GOP's campaign approach, not a one-off experiment.

Why the "AI Generated" Label Does Not Work

Both the Talarico ad and the Schumer video carried disclosure labels. Both were designed to be easy to miss. The text was small, faint, and appeared briefly in a corner of the screen. On mobile, where most people scroll through social media, these labels are almost invisible.

This is not an accident. The disclosure satisfies legal minimums in most states while providing almost zero protection to the viewer. As of May 2025, 28 states had enacted laws requiring AI disclosure in political communications, but the specifics vary wildly. Some require the label to be legible and prominently displayed. Others simply require that a disclosure exist somewhere in the content. The NRSC's approach was engineered to stay inside the letter of the law while defeating its purpose entirely.

The Scale of the Problem Heading Into Midterms

The Talarico case is not an outlier. It is part of a documented pattern. Researchers at the Centre for Emerging Technology and Security, writing in late 2025, identified deepfake political ads as one of the highest-priority AI threats to 2026 elections. Their analysis found that synthetic media in political campaigns had moved from experimental to mainstream in under two years.

The Transparency Coalition's 2025 State AI Legislation Report counted 73 new AI-related laws passed across 27 states that year, a sharp increase from prior years. But legislation is reactive by nature. A new law in New York might require clearer AI disclosure on political ads, while the same ad runs freely in Texas under looser rules. Candidates and political committees can still shop for the most permissive jurisdiction.

Meanwhile, the tools to create convincing deepfakes have become cheaper and faster. What took a production team weeks in 2022 can now be done in hours on consumer hardware. The asymmetry between creation and detection still heavily favors the attacker.

How to Spot a Political Deepfake

There are specific things to look for when a political video feels off:

- Unnatural blinking patterns. AI-generated faces often blink too rarely, or blink at odd intervals. Watch for 10 seconds and count the blinks.

- Lip sync errors at the edges. The inner mouth area and teeth are still hard to render accurately. Look at the corners of the mouth during fast speech.

- Flat emotional range. Deepfake faces often fail at micro-expressions. The face may look neutral or eerily smooth when the voice conveys strong emotion.

- Lighting mismatches. Skin tones may shift slightly from frame to frame, or the lighting on the face may not match the background environment.

- Source verification. Ask where the video came from. If a clip of a politician saying something inflammatory surfaced on an anonymous account with no original broadcast source, that is a red flag regardless of how real it looks.
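
For readers who want to see how the blink check from the list above could be automated, here is a minimal Python sketch. It is an illustration only, not how any particular detection tool works. It assumes you already have one "eye openness" number per video frame (for example an eye aspect ratio from a facial landmark tool, which is not shown here). The function names, the 0.2 threshold, and the two-frame minimum are all hypothetical values chosen for the example.

```python
from typing import Sequence


def count_blinks(
    eye_openness: Sequence[float],
    closed_threshold: float = 0.2,   # assumed cutoff: below this the eye counts as closed
    min_closed_frames: int = 2,      # assumed: ignore single-frame dips as noise
) -> int:
    """Count blinks in a per-frame eye-openness series.

    A blink is a run of at least `min_closed_frames` consecutive frames
    whose openness value falls below `closed_threshold`.
    """
    blinks = 0
    closed_run = 0
    for value in eye_openness:
        if value < closed_threshold:
            closed_run += 1
        else:
            if closed_run >= min_closed_frames:
                blinks += 1
            closed_run = 0
    # A clip may end while the eyes are still closed.
    if closed_run >= min_closed_frames:
        blinks += 1
    return blinks


def blinks_per_minute(eye_openness: Sequence[float], fps: float, **kwargs) -> float:
    """Convert the blink count into a per-minute rate for a clip at `fps` frames per second."""
    if not eye_openness or fps <= 0:
        return 0.0
    duration_seconds = len(eye_openness) / fps
    return count_blinks(eye_openness, **kwargs) * 60.0 / duration_seconds


if __name__ == "__main__":
    # Synthetic series: one real blink (3 closed frames) and one 1-frame dip.
    series = [0.3] * 10 + [0.1] * 3 + [0.3] * 10 + [0.1] * 1 + [0.3] * 6
    print(count_blinks(series))
    print(blinks_per_minute(series, fps=30))
```

A blink rate far below what you see in genuine footage of the same speaker is a reason to look closer, not proof of manipulation. Treat it as one signal alongside the other checks in the list.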

What Actually Needs to Change

Disclosure labels placed by the people who made the deepfake are not a real solution. The NRSC proved that. What works is independent, automated detection at the platform level, before content goes viral, not after. Several major platforms have announced commitments to label AI content in political ads, but enforcement has been inconsistent and the penalties for non-compliance remain minimal.

The more durable answer is building detection into the tools voters already use. When you encounter a video that makes you feel angry, shocked, or primed to share, that emotional spike is exactly the moment to pause and check. That is not paranoia. It is the same critical instinct that journalism schools have taught for decades, now applied to video.

Before sharing any political video this election season, run it through a detection tool. The 30 seconds it takes could be the difference between spreading a deepfake and stopping one. FakeOut is free on Android, with an iOS beta in development, and lets you check images and videos for AI manipulation in seconds.

The Talarico ad will not be the last deepfake political video of 2026. It will probably not even be remembered as the worst one by November. But it is useful as a case study, because it was brazen enough to document clearly, and subtle enough to fool millions of casual viewers. That combination is the whole problem. And the answer to it is not more small-print labels. It is a more skeptical, better-equipped public.