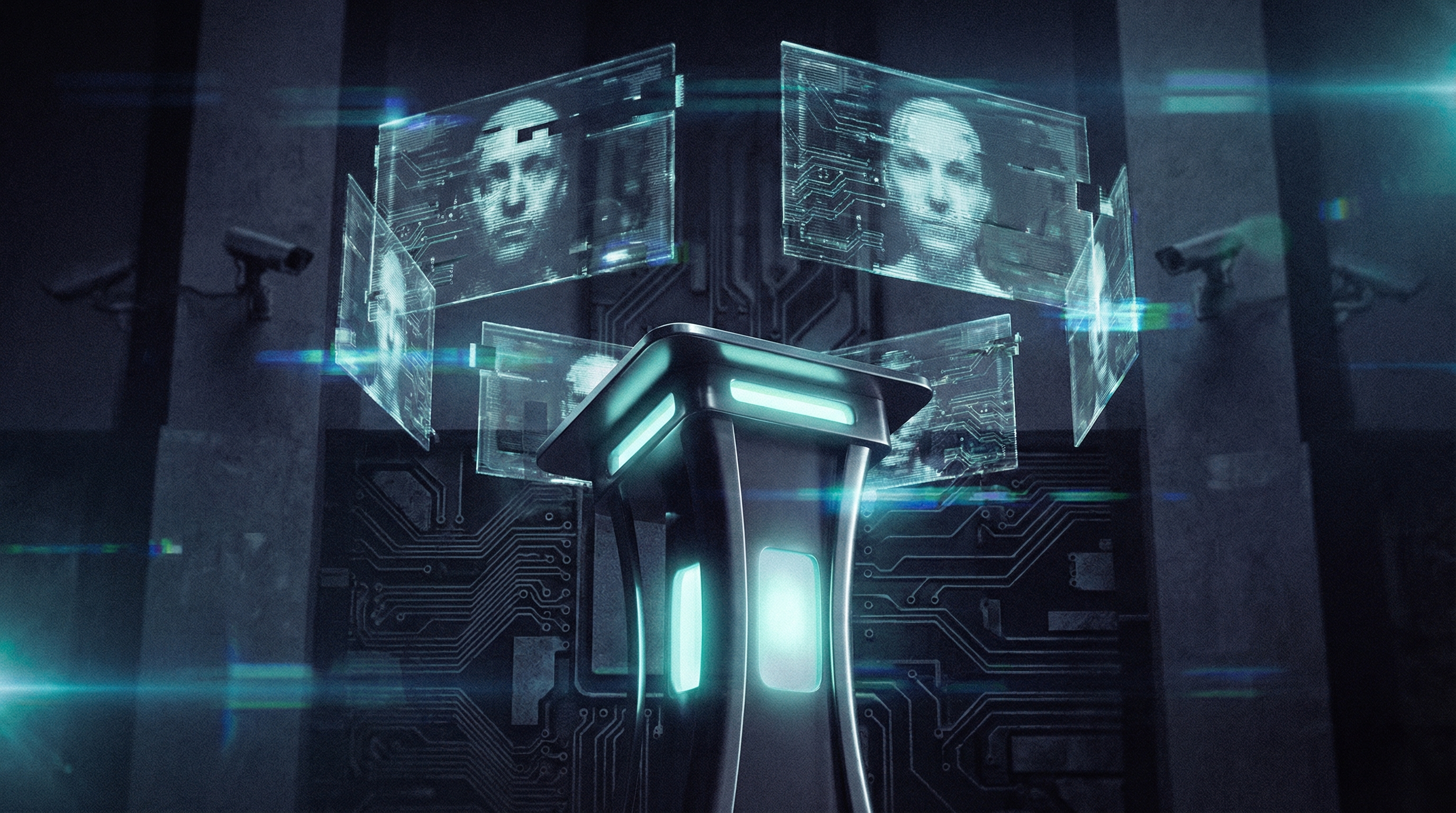

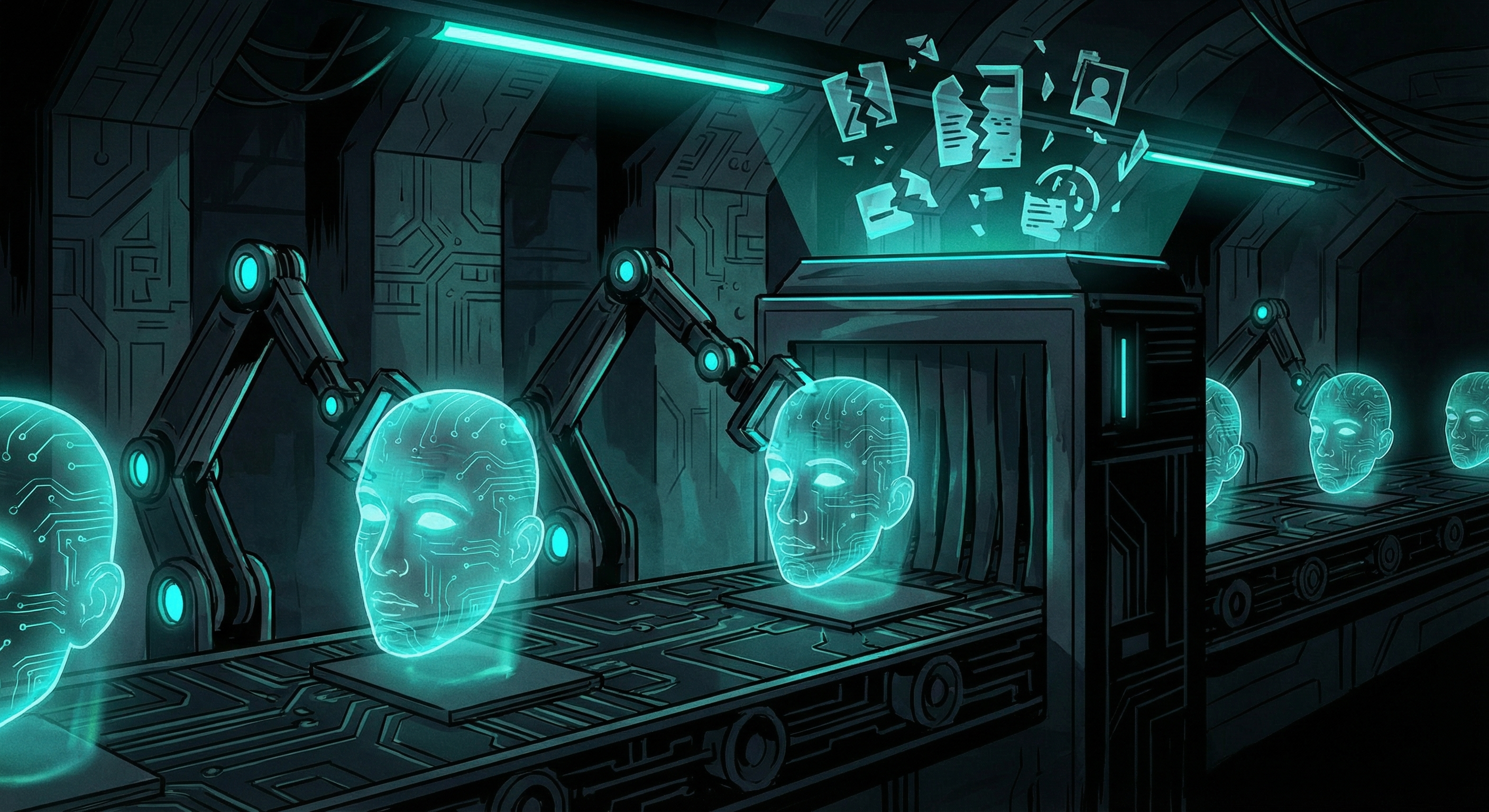

What Are Content Credentials? The AI Watermarking Standard Coming to Your Feed in 2026

C2PA Content Credentials promise to label AI-generated images with cryptographically signed provenance metadata. But Instagram and X strip that metadata when you upload. Here's what that means ahead of the EU AI Act's August 2026 deadline.
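Why does stripping matter so much? Content Credentials travel inside the image file as metadata, not in the pixels. In a JPEG, C2PA places its manifest store in JUMBF boxes carried by APP11 marker segments. Any pipeline that drops those segments leaves the picture intact but erases the credential. The sketch below is a minimal, hypothetical illustration under that assumption (baseline JPEG, manifest in APP11). `has_c2pa_manifest` is a byte-level heuristic rather than a validator: it does not parse the manifest or check signatures. `strip_app11` imitates a generic metadata-stripping step and does not claim to reproduce what any specific platform does; many platforms also re-encode images, which discards metadata anyway.

```python
import struct

APP11 = 0xEB  # JPEG APP11 marker; C2PA embeds its JUMBF manifest store here
SOS = 0xDA    # Start of Scan: compressed pixel data follows this segment


def iter_segments(data: bytes):
    """Yield (marker, start, end) for each marker segment up to and including SOS."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG (missing SOI marker)")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        # The 2-byte big-endian length counts itself but not the 0xFFxx marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        end = pos + 2 + length
        yield marker, pos, end
        if marker == SOS:
            return  # everything after this header is entropy-coded image data
        pos = end


def has_c2pa_manifest(data: bytes) -> bool:
    """Heuristic: an APP11 segment with a JPEG XT 'JP' header that mentions 'c2pa'."""
    for marker, start, end in iter_segments(data):
        payload = data[start + 4:end]
        if marker == APP11 and payload.startswith(b"JP") and b"c2pa" in payload:
            return True
    return False


def strip_app11(data: bytes) -> bytes:
    """Drop every APP11 segment, leaving all other segments and pixel data untouched."""
    out = bytearray(data[:2])  # keep the SOI marker
    last_end = 2
    for marker, start, end in iter_segments(data):
        if marker != APP11:
            out += data[start:end]
        last_end = end
    out += data[last_end:]  # compressed image data and EOI, copied verbatim
    return bytes(out)
```

To try it on a real file, compare `has_c2pa_manifest(open("photo.jpg", "rb").read())` (the file name is a placeholder) on an original credentialed image and on a copy downloaded back from a platform. The image still decodes the same either way. Only the provenance record is gone.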